I’ve been thinking a lot about how we use AI in customer support, and I keep coming back to the same point: the goal should be a better product experience, not just lower support costs.

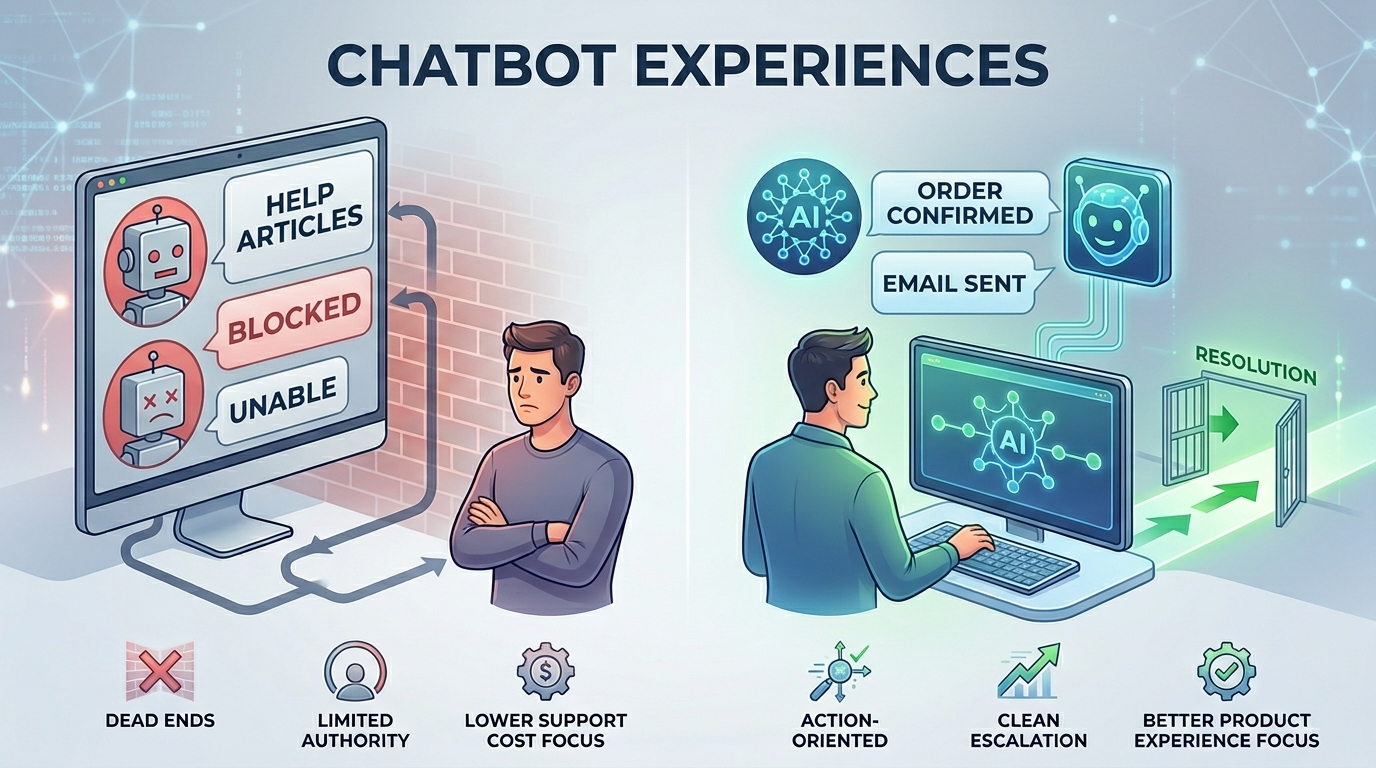

I had two chatbot experiences recently that made the difference really clear.

Chatbot #1 (banking)

I went to my bank’s chatbot as a last resort. I had already searched their help docs and tried the usual fixes, but I still couldn’t resolve the issue. I expected the bot to either take action or route me to someone who could.

It couldn’t do either.

It had no authority to fix anything and just repeated the documentation back to me. There was no escalation path, no option to bring in a human, and no way to move forward once the script stopped matching my situation. I was basically stuck in a loop.

And that’s been the pattern in a lot of my chatbot support experiences. They’re fine for basic “where do I find X” questions, but the moment you need an exception, account-specific help, or an actual decision, they fall apart. At that point you don’t feel supported. You feel blocked.

What stands out to me is that the failure often isn’t the AI. It’s the product decisions around it. A chatbot can be as polished as you want, but if it can’t do meaningful work and it can’t escalate cleanly, it becomes a wall.

Chatbot #2 (e-commerce)

Completely different outcome.

I ordered a product online and later lost one of its parts. I reached out to support and got a chatbot. It acknowledged the issue immediately and asked for my address. Within a few minutes of providing it, I received an invoice for the replacement part. The whole exchange took only two messages.

Fast, direct, and focused on resolution.

And the interesting part is that it didn’t feel “smart” in a flashy way. It felt connected. It had the authority to complete a workflow. It could move the problem forward.

What I took from this

A lot of companies measure chatbot success by containment, deflection rate, or “tickets avoided.” That might look good internally, but customers measure support with one metric: how quickly the problem gets solved.

If a bot can’t take meaningful action, the next requirement is simple: it needs a clear, built-in escalation path to a human, with context. Not a dead end. Not “try this article.” A real handoff that includes what the customer already tried, what the system knows, and why the bot is escalating.
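To make that handoff concrete, here’s a minimal sketch in Python of what it could carry. Everything in it is hypothetical: the `Handoff` class, its field names, the `missing_context` helper, and the sample values are illustrations I made up, not any particular vendor’s API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Handoff:
    """Hypothetical payload a bot passes to a human agent when it escalates."""
    customer_id: str
    issue_summary: str
    steps_already_tried: list[str] = field(default_factory=list)  # what the customer already tried
    known_context: dict[str, str] = field(default_factory=dict)   # what the system knows
    escalation_reason: str = ""                                    # why the bot is escalating


def missing_context(handoff: Handoff) -> list[str]:
    """Return the names of the context fields that are still empty."""
    checks = {
        "steps_already_tried": handoff.steps_already_tried,
        "known_context": handoff.known_context,
        "escalation_reason": handoff.escalation_reason.strip(),
    }
    return [name for name, value in checks.items() if not value]


# Illustrative values only.
handoff = Handoff(
    customer_id="c-123",
    issue_summary="Card payment declined after address change",
    steps_already_tried=["Read the help article", "Re-entered card details"],
    known_context={"account_status": "active"},
    escalation_reason="Needs an account-level decision the bot cannot make",
)
gaps = missing_context(handoff)  # empty list when the agent gets full context
```

The point of a check like `missing_context` is that the bot shouldn’t hand off an empty shell. If the agent has to ask the customer to start over, the escalation path exists on paper but not in practice.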

Also, if the bot’s primary job is paraphrasing help docs, that’s not support. That’s search. Search is useful, but it’s not the same thing as resolution, especially in high-trust categories like banking where a dead-end experience does real damage.

This is where I think AI can genuinely make products better:

- Give it authority to execute common workflows, not just answer questions

- Design escalation as a first-class feature, not a last resort

- Measure outcomes like time-to-resolution and first-contact resolution

- Reduce friction and dead ends, not headcount
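To show why the choice of metric matters, here’s a rough sketch in Python that computes containment next to the outcome metrics from the list above. The `Conversation` record, the function names, and the four sample conversations are all assumptions made up for illustration, not real data or a real analytics API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class Conversation:
    """Hypothetical support conversation record."""
    opened_at: datetime
    resolved_at: Optional[datetime]  # None if the problem was never solved
    contacts: int                    # separate contacts the customer needed
    escalated: bool                  # True if a human was brought in


def containment_rate(convs: List[Conversation]) -> float:
    """Share of conversations that never reached a human."""
    if not convs:
        return 0.0
    return sum(not c.escalated for c in convs) / len(convs)


def first_contact_resolution(convs: List[Conversation]) -> float:
    """Share of conversations resolved in a single contact."""
    if not convs:
        return 0.0
    solved_first_time = sum(
        c.resolved_at is not None and c.contacts == 1 for c in convs
    )
    return solved_first_time / len(convs)


def median_minutes_to_resolution(convs: List[Conversation]) -> Optional[float]:
    """Median minutes from open to resolution, over resolved conversations only."""
    minutes = [
        (c.resolved_at - c.opened_at).total_seconds() / 60
        for c in convs
        if c.resolved_at is not None
    ]
    return median(minutes) if minutes else None


t0 = datetime(2024, 1, 1, 9, 0)
sample = [
    Conversation(t0, t0 + timedelta(minutes=5), contacts=1, escalated=False),
    Conversation(t0, None, contacts=3, escalated=False),  # stuck in a loop
    Conversation(t0, t0 + timedelta(minutes=30), contacts=1, escalated=True),
    Conversation(t0, None, contacts=2, escalated=False),  # gave up
]

print(containment_rate(sample))              # 0.75
print(first_contact_resolution(sample))      # 0.5
print(median_minutes_to_resolution(sample))  # 17.5
```

In this made-up sample, containment looks healthy at 75%, yet only half of the customers got their problem solved on the first contact, and the two who were "contained" without a resolution never got it solved at all.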

When AI is implemented this way, customers don’t think “I talked to a chatbot.” They think “that was easy.” That’s the bar, and that’s what Zeytech’s AI Services strive to deliver.

Let’s Talk

Want to talk more about your own chatbot experiences, good or bad? Or want to see how Zeytech can help you build or implement a chatbot that your users will appreciate?